Everyone needs a tool to work. Humans began to be called “reasonable” precisely from the moment they started using tools for their activities (the wording is clumsy, but broadly true). Any musician, being a reasonable person, should therefore have at least some command of a musical instrument. In this article, however, we will focus not on a musical instrument in the usual sense (guitar, piano, triangle …) but on an instrument needed later on, for processing an audio signal: the sound interface.

Theoretical basis

Let us make one reservation right away: in this article, “sound interface”, “audio interface”, and “sound card” are used as contextual synonyms. Strictly speaking, a sound card is one kind of sound interface. From the point of view of systems analysis, an interface is something designed for the interaction of two or more systems. In our case, the systems can be paired like this:

- sound recording device (microphone) – processing system (computer);

- processing system (computer) – a sound reproducing device (speakers, headphones);

- a hybrid of the two pairings above.

Formally, all an ordinary user needs from a sound interface is to take data from the recording device and hand it to the computer or, in the other direction, to take data from the computer and send it to the playback device. As the signal passes through the audio interface, it is converted so that the receiving side can process it. The (final) playback device reproduces an analog signal (in the simplest case, a sinusoid), which we perceive as a sound, that is, an elastic wave. A modern computer works with digital information, that is, information encoded as a sequence of zeros and ones (more precisely, as signals that take discrete bands of analog levels). The sound interface therefore has to convert the analog signal to digital and/or back. This is the core of any sound interface: a digital-to-analog converter and an analog-to-digital converter (DAC and ADC, respectively), plus supporting circuitry in the form of a hardware codec, various filters, and so on.

Modern PCs, laptops, tablets, smartphones, etc., as a rule, already have a built-in sound card, which allows you to record and play sounds, if you have recording and playback devices.

This is where one of the most frequently asked questions arises:

Can I use the built-in sound card to record and / or process sound?

There is no single answer to this question.

How does a sound card work?

Let’s figure out what happens to a signal as it passes through the sound card. To begin with, let’s try to understand how a digital signal is converted to analog. As mentioned earlier, a DAC is used for this kind of conversion. We will not wander into the jungle of hardware internals, technologies, and component bases; we will simply sketch in plain terms what happens in the hardware.

So, we have a certain digital sequence, which is an audio signal to be sent to the output device.

111111000011001001100101010100111111001100101000000110100001011101100110110001

00000001000110001010111110010100010010110011101111111110111001111001110010010

Here, colors mark the small encoded pieces of sound. One second of sound can be encoded with a different number of such pieces; that number is determined by the sampling frequency. For example, at a sampling frequency of 44.1 kHz, one second of sound is divided into 44,100 pieces. The number of zeros and ones in one piece is determined by the sampling depth (quantization), or simply the bit depth.
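As a rough illustration of the two numbers just introduced, the sketch below cuts a bit string into pieces of a given bit depth and counts how many bits one second of sound takes. The function names and the 4-bit depth are made up for the example; real audio normally uses 16 or 24 bits per piece.

```python
def split_into_samples(bits: str, bit_depth: int) -> list[int]:
    """Cut a string of '0'/'1' characters into pieces of bit_depth bits each."""
    usable = len(bits) - len(bits) % bit_depth  # ignore an incomplete trailing piece
    return [int(bits[i:i + bit_depth], 2) for i in range(0, usable, bit_depth)]


def bits_per_second(sample_rate_hz: int, bit_depth: int, channels: int = 1) -> int:
    """Raw amount of data in one second of uncompressed sound."""
    return sample_rate_hz * bit_depth * channels


# The first 16 bits of the sequence above, read as 4-bit pieces (assumed depth).
print(split_into_samples("1111110000110010", 4))  # [15, 12, 3, 2]
print(bits_per_second(44_100, 16))                # 705600
```

The same 16 bits read at a different bit depth would give completely different values, which is why both sides of the interface have to agree on the sampling frequency and bit depth in advance.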

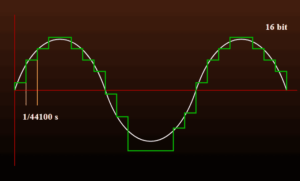

Now, to imagine how the DAC works, let’s recall school geometry. Let time be the X axis and signal level the Y axis. On the X axis, mark off as many segments as the sampling frequency dictates; on the Y axis, mark off 2^n segments, one per quantization level, where n is the bit depth. Then, step by step, plot the points that correspond to the specific sound levels.

It is worth noting that, in reality, a signal encoded this way looks like a broken line (orange graph). The converter therefore applies so-called approximation, bringing the signal closer to the shape of a sinusoid, which smooths out the levels (blue graph).

The analog signal obtained from decoding the digital one will look something like this. The analog-to-digital conversion works exactly the other way around: every 1/(sampling rate) seconds, the signal level is measured and encoded according to the sampling depth.
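The conversion in both directions can be sketched in a few lines. This is a toy model under stated assumptions, not how a real converter chip is built: the input is a function of time with values between -1 and 1, the names `adc` and `dac_stairs` are invented for the example, and the "DAC" returns only the stepped broken line, without the smoothing stage.

```python
import math


def adc(signal, sample_rate_hz, bit_depth, num_samples):
    """Measure signal(t) every 1/sample_rate_hz seconds and encode each level."""
    levels = 2 ** bit_depth
    codes = []
    for k in range(num_samples):
        t = k / sample_rate_hz
        x = max(-1.0, min(1.0, signal(t)))    # clip to the converter's input range
        code = int((x + 1.0) / 2.0 * levels)  # index of the interval x falls into
        codes.append(min(code, levels - 1))   # x == 1.0 belongs to the top interval
    return codes


def dac_stairs(codes, bit_depth):
    """Turn codes back into levels (the middle of each interval), unsmoothed."""
    levels = 2 ** bit_depth
    return [(c + 0.5) / levels * 2.0 - 1.0 for c in codes]


def tone(t):
    """A 1 kHz test tone."""
    return math.sin(2 * math.pi * 1000 * t)


codes = adc(tone, sample_rate_hz=8000, bit_depth=4, num_samples=8)
restored = dac_stairs(codes, bit_depth=4)
print(codes)
print([round(v, 3) for v in restored])
```

With 4 bits there are only 16 levels, so each restored value can differ from the true one by up to 1/16 of full scale; raising the bit depth shrinks that error.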

So, we now know (more or less) how DACs and ADCs work; next it is worth considering which parameters affect the final signal.

The main parameters of the sound card

While reviewing how the converters work, we met two main parameters: the sampling frequency and the sampling depth. Let us consider them in more detail.

The sampling rate is, roughly, the number of time slices into which one second of sound is divided. Why is it so important for sound engineers to have a sound card that can operate at a frequency higher than 40 kHz? The reason is the so-called Kotelnikov theorem, known in English-language literature as the Nyquist–Shannon sampling theorem (yes, mathematics again). Put simply, the theorem says that, under ideal conditions, an analog signal can be restored from a discrete (digital) one with arbitrary accuracy if the sampling frequency is more than twice the highest frequency present in that analog signal. So if we work with sound that a person can hear (~20 Hz to 20 kHz), the sampling frequency must exceed 2 × 20,000 = 40,000 Hz. Hence the de facto standard of 44.1 kHz: the sampling frequency needed to encode the signal accurately, plus a little more (this is, of course, a simplification, since the standard was set by Sony and the real reasons are much more prosaic). However, as mentioned, this holds under ideal conditions: the signal must be infinitely extended in time and must have no singularities in the form of zero spectral power or large-amplitude peak bursts. Needless to say, a typical analog audio signal does not meet these conditions: it is finite in time, has bursts, and drops to “zero” (roughly speaking, it has gaps in time).
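What happens when the condition of the theorem is violated can be shown with a small sketch. A pure tone above half the sampling frequency produces exactly the same samples as some lower tone, so after conversion the two cannot be told apart. The helper name `alias_frequency` is made up for the example, and only an idealized pure tone is considered.

```python
import math


def alias_frequency(f_hz: float, sample_rate_hz: float) -> float:
    """Frequency at which a pure tone of f_hz shows up after sampling."""
    f = f_hz % sample_rate_hz
    return min(f, sample_rate_hz - f)


print(alias_frequency(1_000, 44_100))   # 1000: below half the rate, preserved
print(alias_frequency(25_000, 44_100))  # 19100: folded back into the audible range

# The samples of the 25 kHz tone and of its 19.1 kHz alias coincide.
fs = 44_100
for k in range(5):
    original = math.cos(2 * math.pi * 25_000 * k / fs)
    alias = math.cos(2 * math.pi * 19_100 * k / fs)
    print(round(original, 6), round(alias, 6))
```

This is one reason converters filter out everything above half the sampling frequency before sampling, and why a little headroom above 40 kHz is useful.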

The sampling depth, or bit depth, is the exponent n in 2^n, the number of levels into which the signal amplitude is divided. Because of the imperfection of human hearing, a listener is, as a rule, comfortable with a bit depth of at least 10 bits, that is, 1,024 levels. A person is unlikely to perceive any further increase in bit depth, which cannot be said of the equipment.
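To put numbers on the bit depth, the sketch below prints the number of levels for a few common depths together with the textbook signal-to-noise estimate for an ideal converter and a full-scale sine wave (roughly 6.02 dB per bit plus 1.76 dB). That estimate is a standard approximation brought in for illustration, not something measured on a particular sound card, and the function names are invented here.

```python
import math


def quantization_levels(bit_depth: int) -> int:
    """Number of amplitude levels available at a given bit depth."""
    return 2 ** bit_depth


def ideal_snr_db(bit_depth: int) -> float:
    """Textbook signal-to-quantization-noise ratio for a full-scale sine wave."""
    return 20 * math.log10(2 ** bit_depth) + 10 * math.log10(1.5)


for bits in (10, 16, 24):
    print(bits, quantization_levels(bits), round(ideal_snr_db(bits), 1))
```

Each extra bit doubles the number of levels, which matters less for listening than for processing, where rounding errors from every operation add up.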

As can be seen from the above, when converting a signal, the sound card makes certain “concessions”.

All this leads to the fact that the resulting signal will not exactly repeat the original.

Problems choosing a sound card

So, a sound engineer or musician (take your pick) has bought a computer with a brand-new OS, a powerful processor, and plenty of RAM. The sound card is built into the motherboard at the factory, has outputs for a 5.1 sound system, and its DAC/ADC offers a 48 kHz sampling rate (no longer a mere 44.1 kHz!), 24-bit resolution, and so on and so forth … To celebrate, the engineer installs recording software and discovers that this sound card cannot simultaneously “take in” sound, apply effects, and play the result back instantly. The sound may be of very high quality, but some time passes between the moment the instrument plays a note and the moment the computer has processed the signal and plays it back; simply put, there is lag. Strange: the consultant from Eldorado praised this computer so highly, raved about the sound card and everything … and now … eh. Sadly, the engineer goes back to the store, returns the computer, pays a hefty extra sum for one with an even more powerful processor, even more RAM, and a sound card at 96 (!!!) kHz and 24 bits, and … ends up with the same thing.

In fact, typical computers with typical built-in sound cards and stock drivers were never designed to process and reproduce sound in a mode close to real time; that is, they are not intended for VST-RTAS processing. The problem is not the “basic” components (processor, RAM, hard drive), each of which is capable of working in such a mode. The problem is that such a sound card sometimes simply cannot “work” in real time.

Whenever any computer device operates, so-called delays occur: the processor waits for the data it needs for processing. In addition, when developing operating systems, drivers, and application software, programmers resort to so-called software abstractions, where each higher layer of code “hides” all the complexity of the layer below and exposes only the simplest interfaces at its own level. There can be a great many such layers. This approach simplifies development but increases the time it takes for data to travel from the source to the recipient and back.

In fact, lag can occur not only with built-in sound cards but also with those connected via USB, FireWire (may it rest in peace), PCI, and so on.

To avoid this kind of lag, developers use workarounds that get rid of unnecessary abstractions and software transformations. Such solutions include the much-loved ASIO for Windows, JACK (not to be confused with the connector) for Linux, and CoreAudio and AudioUnit for OSX. It is worth noting that OSX and Linux manage fine without the kind of crutches Windows needs. However, not every device is capable of operating at the required speed and with the required accuracy.

Let’s say that our engineer/musician is a born tinkerer and managed to configure JACK/CoreAudio, or to make the sound card work with a community-made ASIO driver.

In the best case, our master has thus reduced the lag from half a second to an almost acceptable 100 ms. The problem of the remaining milliseconds lies in everything else, including the internal transmission of the signal. On its way from the source through the USB or PCI interface to the central processor, the signal is handled by the south bridge, which serves most peripherals and is directly subordinate to the central processor. The central processor, however, is an important and busy character and does not always have time to process sound right now. Our master will therefore have to either accept that these 100 ms can “jump” by ±50 ms, if not more, or purchase a sound card with its own data-processing chip, a DSP (Digital Signal Processor).
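One part of this lag is easy to estimate. The sketch below assumes, as an illustration that goes beyond what the article states, that the driver hands sound to the program in fixed-size buffers; a buffer cannot be processed until it is full, so its length in frames sets a lower bound on the delay in each direction. The function names and buffer sizes are hypothetical.

```python
def buffer_latency_ms(buffer_frames: int, sample_rate_hz: int) -> float:
    """Time needed to fill (or play out) one buffer, in milliseconds."""
    return buffer_frames * 1000 / sample_rate_hz


def max_buffer_frames(target_ms: float, sample_rate_hz: int) -> int:
    """Largest buffer, in frames, that still fits into the target delay."""
    return int(target_ms * sample_rate_hz / 1000)


print(buffer_latency_ms(4800, 48_000))           # 100.0 ms, the "almost acceptable" case
print(round(buffer_latency_ms(256, 48_000), 2))  # 5.33 ms with a small buffer
print(max_buffer_frames(10, 48_000))             # 480 frames for a 10 ms budget
```

Smaller buffers mean less delay, but they also leave the busy central processor less time to deliver each one, which is where the "jumps" described above come from.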

As a rule, most “external” sound cards (the so-called gaming sound cards) have a coprocessor of this kind; however, it is very inflexible and is essentially intended for “improving” the reproduced sound. Sound cards designed for sound processing from the start have a more adequate coprocessor or, in the extreme case, the coprocessor is sold separately. The advantage of a coprocessor is that special software can process the signal with almost no involvement of the central processor. The disadvantages can be the price and the way the equipment is “tailored” to particular software.

Separately, I would like to mention the interface between the sound card and the computer. The requirements here are quite modest: interfaces such as USB 2.0 or PCI are enough for a sufficiently high processing speed. Unlike a video signal, an audio signal is not a large amount of data, so the requirements are minimal. However, I will add a fly in the ointment: the USB protocol does not guarantee 100% delivery of information from the sender to the recipient.
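How small the audio stream is can be checked with simple arithmetic. The sketch compares the raw bit rate of uncompressed sound with the nominal 480 Mbit/s signaling rate of USB 2.0. Protocol overhead is ignored, so the real share is somewhat higher, and the channel counts are just example values.

```python
USB2_NOMINAL_BITS_PER_SECOND = 480_000_000  # nominal rate; real throughput is lower


def pcm_bit_rate(sample_rate_hz: int, bit_depth: int, channels: int) -> int:
    """Raw bit rate of an uncompressed audio stream."""
    return sample_rate_hz * bit_depth * channels


for rate_hz, bits, channels in ((48_000, 24, 2), (96_000, 24, 8)):
    stream = pcm_bit_rate(rate_hz, bits, channels)
    share = stream / USB2_NOMINAL_BITS_PER_SECOND
    print(f"{rate_hz} Hz, {bits} bit, {channels} ch: "
          f"{stream / 1e6:.3f} Mbit/s, {share:.2%} of USB 2.0")
```

Even eight channels at 96 kHz and 24 bits take only a few percent of the bus, so bandwidth is rarely the bottleneck; timing and delivery guarantees are.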

So the first problem is identified: large delays with standard drivers, or a high price for a sound card with an adequate delay.

Earlier, we established that ideal transmission of an analog signal is no easy task. On top of that, there are the noise and errors that arise when a signal is captured, converted, and transmitted as data: as you may recall from physics, any measuring device has its own error, and any algorithm has its own accuracy.

A joke from radio engineering: radio communication uses so-called Q-codes, three-letter designations for various questions and answers, intended to reduce the number of transmitted characters. One of the unofficial codes, “QZZ”, stands for “Is that 60 Hz hum, or are you snoring?” Here 60 Hz is the parasitic hum at the AC mains frequency.

The joke is telling, because the operation of a sound card is also affected by emissions from nearby equipment, right up to the ultrasound emitted by the central processor as it works. On top of that, the end device (microphone, pickup, speakers, headphones, etc.) adds its own distortion to the recorded or reproduced signal. For marketing purposes, manufacturers of audio devices often deliberately overstate the frequency range of the recorded or reproduced signal, which prompts a very reasonable question from anyone who studied biology and physics at school: “Why, if a person cannot hear outside the 20 Hz to 20 kHz range?” As they say, every truth has its grain of truth. Indeed, many manufacturers state better characteristics only on paper. Nevertheless, if a manufacturer really has made a device that can capture or reproduce a signal over a somewhat wider frequency range, it is worth at least considering buying it.

Here’s the thing. Everyone remembers what a frequency response is: pretty graphs with bumps and dips. When recording a sound (we consider only this case), the microphone distorts it, and that distortion is described by the irregularities of its frequency response within the range it “hears”.

Thus, with a microphone that captures a signal within the standard limits (20 Hz to 20 kHz), we get distortion only in this range. As a rule, the distortions follow a normal distribution (recall probability theory), with a small admixture of random errors. What happens if, all other things being equal, we widen the range of the recorded signal? Following the logic, the “bell” (the probability density graph) stretches along with the range, shifting part of the distortion beyond the audible range we care about.

In practice, it all depends on the equipment developer and it should be checked very carefully. However, the fact remains.

Back to our hardware, where, unfortunately, not everything is so rosy. Like the makers of microphones and speakers, manufacturers of sound cards often lie about the operating modes of their devices. A particular sound card may be advertised as working in 96k/24bit mode while in reality it is still the same 48k/16bit. What may happen is that the driver really does encode the sound with the stated parameters, but the sound card itself (DAC/ADC) cannot deliver them: it simply discards the extra bits of sampling depth and skips some of the samples. The simplest built-in sound cards were once very often guilty of this. And although, as we found out, parameters such as 40k/10bit are quite enough for human hearing, they are not enough for sound processing, because the processing itself introduces distortion. That is, if an engineer or musician recorded a sound with a mediocre microphone or sound card, then even the best programs and hardware will later struggle to clean out all the noise and errors introduced at the recording stage. Fortunately, manufacturers of semi-professional and professional audio equipment do not sin like that.
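One crude way to spot the discarded bit depth described above is to look at the recorded numbers themselves. The sketch assumes the recording is available as a list of signed integers in a 24-bit container (an assumption made for the example) and counts how many of the lowest bits are zero in every sample. It is only a heuristic with a made-up function name: a card that fills the low bits with noise would pass the check while still not delivering true 24-bit resolution.

```python
def effective_bit_depth(samples: list[int], container_bits: int = 24) -> int:
    """Container size minus the number of low bits that are zero in every sample."""
    mask = (1 << container_bits) - 1
    combined = 0
    for s in samples:
        combined |= s & mask  # two's-complement view, so negatives work too
    if combined == 0:
        return 0              # pure silence: nothing can be concluded
    trailing_zero_bits = (combined & -combined).bit_length() - 1
    return container_bits - trailing_zero_bits


padded_16_bit = [v << 8 for v in (1, -3, 1000, -32768)]  # 16-bit data in a 24-bit container
genuine_24_bit = [1, -3, 1000, 8_388_607]

print(effective_bit_depth(padded_16_bit))   # 16
print(effective_bit_depth(genuine_24_bit))  # 24
```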

The last problem is that built-in sound cards simply do not have enough connectors for the necessary devices. In fact, even a gentleman’s set of headphones and a pair of monitors will have nowhere to plug in, and you will have to forget about such frills as inputs with phantom power and separate controls for each channel.

To sum up: the first thing to determine when choosing a type of sound card is what the master is going to do. For rough drafts, when there is no need to record in high quality or to simulate the “ears” of the final listener, a built-in or an external but relatively cheap sound card may well be enough. It can also suit beginner musicians, provided they are not too lazy to deal with reducing delays in real-time processing. Masters who work exclusively offline need not bother with reducing delays and should focus instead on devices that actually deliver the hertz and bits they are set to. For this, there is no need to buy an overly expensive sound card; at the cheapest end, a more or less adequate “gaming” sound card may do. BUT, I stress that drivers for such sound cards try to “improve” the sound in their own way, which is unacceptable: for processing, the sound has to be as clean and balanced as possible, with minimal driver “enhancement”.

However, if you, as a master, need a device that meets requirements both for the quality of the recorded and reproduced signal and for the speed of processing it, you will either have to pay extra for equipment of proper quality or pick two out of three: high quality, low price, high speed.
